Every agent needs a brain. On Abundly, you can choose which large language model (LLM) powers your agent, or simply let the platform pick the best one for you.

Default behavior

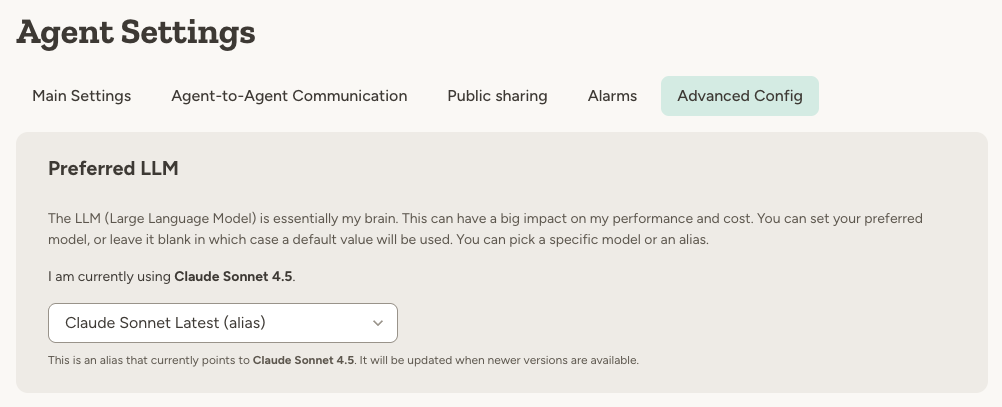

If you don’t have a preference, leave the model set to “(no preference)”. This is the default, and it means Abundly will use whatever model we think works best for general agentic behavior. We continuously evaluate models and update this default as better options become available. This is the right choice for most users: you get great performance without having to think about model selection at all.

Model aliases vs specific versions

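When selecting a model, you can choose between:

- Aliases, such as “Claude Sonnet Latest”, which automatically point to the latest version that we have verified works well
- Specific versions, such as “Claude Sonnet 4.5”, which stay locked to that exact version

Use an alias if you want your agent upgraded automatically as new versions are verified; use a specific version if you need its behavior to stay fixed.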
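To make the difference concrete, here is a minimal Python sketch of how an alias and a pinned version behave when a newer version is verified. The `VERIFIED_ALIASES` table and `resolve_model` function are hypothetical illustrations, not Abundly’s API or implementation; only the version names come from this page.

```python
# Hypothetical alias table: each alias points at the most recently verified version.
VERIFIED_ALIASES: dict[str, str] = {"Claude Sonnet Latest": "Claude Sonnet 4.5"}


def resolve_model(selection: str) -> str:
    """Return the concrete version an agent would run with.

    Aliases are looked up in the table; any other selection is treated as a
    specific version and returned unchanged, so it stays pinned.
    """
    return VERIFIED_ALIASES.get(selection, selection)


before = resolve_model("Claude Sonnet Latest")  # "Claude Sonnet 4.5"

# A newer version is verified: only the alias table changes.
VERIFIED_ALIASES["Claude Sonnet Latest"] = "Claude Sonnet 4.6"

after = resolve_model("Claude Sonnet Latest")  # "Claude Sonnet 4.6"
pinned = resolve_model("Claude Sonnet 4.5")    # still "Claude Sonnet 4.5"
print(before, after, pinned, sep=" | ")
```

Agents that selected the alias move to the new version without any settings change, while agents pinned to a specific version keep running the version they chose.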

Available models

Model availability is workspace-specific. Your admin config determines which model families appear in your picker, and aliases may be added for easier upgrades. Most workspaces use a mix of:

- Anthropic Claude
- OpenAI GPT
- Google Gemini
- xAI Grok

- Claude: Anthropic’s models, known for nuanced reasoning and safety
- GPT: OpenAI’s models, versatile and widely capable
- Gemini: Google’s models, strong at knowledge tasks and multimodal understanding

Comparing models

Not sure which model to choose? We tend to default to the Claude models (Sonnet or Opus), because we find they work very well for agentic behavior. The other models have improved a lot lately, though, and some have specific strengths that you may want to leverage. This is a changing landscape, so you’re probably best off doing your own research online (or asking an agent to do it for you). Here’s a high-level overview of how the models from each provider compare.

Anthropic Claude models

| Model | Best for | Speed | Cost | Notes |
|---|---|---|---|---|
| Claude Opus 4.6 | Complex reasoning, high-stakes tasks | Moderate | $$ | Most capable Claude model. Best for difficult problems that defeat Sonnet. Opus 4.7 is also available as an explicit model choice. |
| Claude Sonnet 4.6 | Everyday work, coding, analysis | Fast | $ | Recommended default. Excellent balance of capability and speed. |
| Claude Haiku 4.5 | High-volume, speed-critical tasks | Fastest | $ | ~3x faster than Sonnet at 1/3 the cost. Near-Sonnet quality for most tasks. |

Google Gemini models

| Model | Best for | Speed | Cost | Notes |
|---|---|---|---|---|
| Gemini Pro 3 | Maximum reasoning depth | Moderate | $$$ | Strongest reasoning. 1M token context for huge documents. |
| Gemini Flash 2.5 | Balanced performance | Fast | $$ | Good multimodal capabilities. Native audio support. |
| Gemini Flash Lite 2.5 | High-volume processing | Fastest | $ | Most cost-effective. Great for bulk summarization and routing. |

OpenAI GPT models

| Model | Best for | Speed | Cost | Notes |
|---|---|---|---|---|
| GPT 5.2 Pro | Mission-critical, complex reasoning | Moderate | $$$$ | Extended reasoning for highest accuracy. Best for high-stakes decisions. |
| GPT 5.2 | Latest improvements, reduced hallucinations | Fast | $$$ | 30% fewer hallucinations. Strong on coding (55.6% SWE-Bench Pro) and reasoning. |
| GPT 5 Mini | Well-defined tasks, interactive apps | Faster | $$ | Great balance of speed and capability. Ideal for chat and coding assistants. |
| GPT 5 Nano | High-volume, simple tasks | Fastest | $ | Ultra-cheap. Best for summarization, classification, and bulk processing. |

xAI Grok models

| Model family | Best for | Speed | Cost | Notes |
|---|---|---|---|---|
| Grok | Fast agentic workflows and tool-heavy tasks | Fast | $$ | Available when your workspace has xAI configured. Some Grok variants use integrated reasoning and may enforce model-specific thinking behavior. |

Per-context model selection

Different contexts in your agent’s life may benefit from different models. You can configure a model and thinking preference per context:

- Chat: Conversations you start with the agent
- Scheduled tasks: When the agent runs on a schedule
- Email: When the agent responds to incoming emails
- Slack: When the agent responds to Slack messages
- Microsoft Teams: When the agent responds to Teams messages
- Agent messages: When other agents send your agent a message
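The precedence implied by this list, together with the per-task overrides covered in the next section, can be sketched as a small lookup. This is a hypothetical model, not Abundly’s implementation: `effective_model` and the context keys are illustrative names, and falling back to “(no preference)” when a context has no model set is an assumption made for the sketch.

```python
from typing import Optional

# Stand-in for "(no preference)": the platform picks the model.
NO_PREFERENCE = "(no preference)"


def effective_model(
    context_models: dict[str, str],
    context: str,
    task_override: Optional[str] = None,
) -> str:
    """Pick the model for one run of the agent (illustrative precedence only)."""
    # A per-task override on a scheduled task wins when it is set.
    if task_override:
        return task_override
    # Otherwise use the model configured for this context; an unset context
    # falls back to "(no preference)" (an assumption for this sketch).
    return context_models.get(context, NO_PREFERENCE)


# Hypothetical per-context settings for one agent.
agent_contexts = {
    "chat": "Claude Sonnet 4.6",
    "scheduled_tasks": "Claude Haiku 4.5",
    "slack": "GPT 5 Mini",
}

print(effective_model(agent_contexts, "chat"))
print(effective_model(agent_contexts, "scheduled_tasks"))
print(effective_model(agent_contexts, "scheduled_tasks", task_override="Claude Opus 4.6"))
print(effective_model(agent_contexts, "email"))
```

Keeping the override as an optional argument mirrors the idea that only individual scheduled tasks carry their own setting, while every other run is decided by its context.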

Per-task model overrides

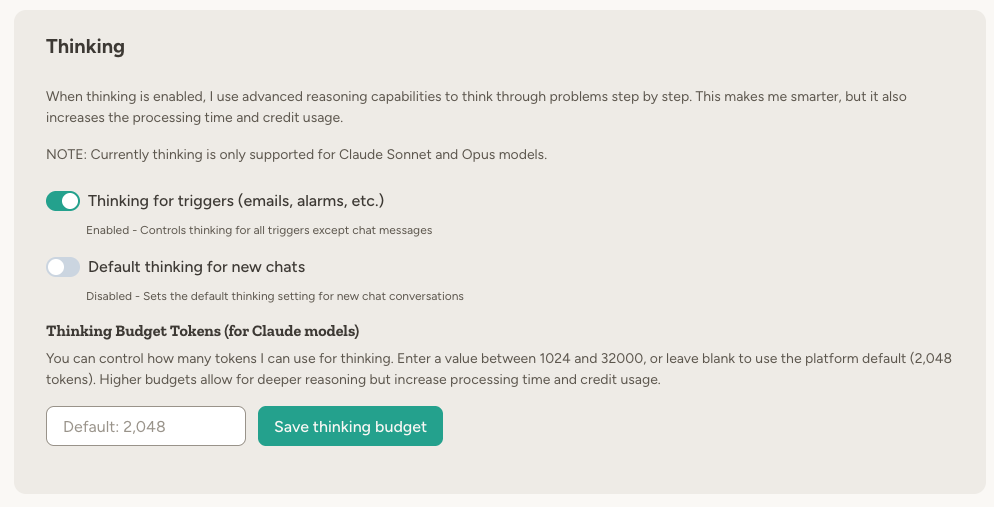

Individual scheduled tasks can have their own model and thinking setting, which overrides the per-context default for that task. Open a task’s settings card to configure it. This is useful when one particular task needs heavier reasoning than your other scheduled tasks.

Thinking mode

Many models support a thinking mode where the model spends more effort reasoning before it responds.

Thinking behavior depends on the selected model:

- Supports thinking — You can toggle thinking on or off

- Requires thinking — Thinking is forced on

- Does not support thinking — The thinking toggle is hidden
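The three cases above can be summarized as a small function that returns whether thinking is on and whether you can change it. This is an illustrative sketch, not Abundly’s code: `ThinkingSupport` and `thinking_state` are hypothetical names. From the user’s point of view it treats “forced on” and “hidden” alike in one respect: in both cases the setting cannot be changed.

```python
from enum import Enum


class ThinkingSupport(Enum):
    """How a model handles thinking mode (hypothetical labels)."""
    SUPPORTED = "supports thinking"      # user can toggle thinking on or off
    REQUIRED = "requires thinking"       # thinking is forced on
    UNSUPPORTED = "no thinking support"  # the thinking toggle is hidden


def thinking_state(support: ThinkingSupport, user_wants_thinking: bool) -> tuple[bool, bool]:
    """Return (thinking_enabled, user_can_toggle) for the selected model."""
    if support is ThinkingSupport.REQUIRED:
        # The user's choice is ignored; thinking is always on.
        return True, False
    if support is ThinkingSupport.UNSUPPORTED:
        # No toggle is offered, so thinking stays off.
        return False, False
    # Supported: the user's toggle decides.
    return user_wants_thinking, True


print(thinking_state(ThinkingSupport.SUPPORTED, True))    # (True, True)
print(thinking_state(ThinkingSupport.REQUIRED, False))    # (True, False)
print(thinking_state(ThinkingSupport.UNSUPPORTED, True))  # (False, False)
```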

When to change models

| Scenario | Recommendation |
|---|---|
| Just getting started | Leave it on “(no preference)” |
| Complex reasoning, planning | Claude Opus, Gemini Pro, or GPT 5.2 Pro |
| Everyday tasks, coding | Claude Sonnet, GPT 5.2, or GPT 5 Mini |
| High-volume, simple tasks | Claude Haiku, Gemini Flash Lite, or GPT 5 Nano |
| Speed is critical | Claude Haiku, Gemini Flash, or GPT 5 Nano |
| Lowest hallucination rates | GPT 5.2 or GPT 5.2 Pro |
| Working with long documents | Gemini Pro (1M token context) |

Unified interface

Regardless of which model you choose, the platform provides a consistent experience:

- Switch models even mid-conversation
- Same capabilities work across all models
- Consistent behavior and tool usage
- No need to learn different APIs

What if I pick a model that doesn't work well for my use case?

You can switch models at any time. If you’re not happy with the results, try a different model—your agent’s instructions and capabilities stay the same.

How does pricing work for different models?

Each model has different credit costs per token. More capable models like Opus and GPT 5.2 cost more per request, while Haiku and Flash Lite are very cost-effective. Check your usage dashboard to monitor credit consumption.
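Because costs are charged per token, a rough estimate is rate × tokens per request × number of requests. The sketch below compares two tiers for a high-volume workload. The rates are made-up numbers for illustration only (real rates vary by model and are not listed on this page), and real pricing may distinguish input and output tokens; your usage dashboard shows actual consumption.

```python
# Made-up credit rates per 1,000 tokens, for illustration only.
HYPOTHETICAL_CREDITS_PER_1K_TOKENS = {
    "capable model": 10.0,
    "cost-effective model": 1.0,
}


def estimate_credits(model: str, tokens_per_request: int, requests: int) -> float:
    """Estimate total credits as rate x (tokens per request / 1000) x requests."""
    rate = HYPOTHETICAL_CREDITS_PER_1K_TOKENS[model]
    return rate * tokens_per_request / 1000 * requests


# A high-volume workload: 5,000 requests of about 2,000 tokens each.
for name in HYPOTHETICAL_CREDITS_PER_1K_TOKENS:
    print(f"{name}: {estimate_credits(name, 2_000, 5_000):,.0f} credits")
# capable model: 100,000 credits
# cost-effective model: 10,000 credits
```

The tenfold gap comes entirely from the assumed rates; the point is that for high-volume agents the per-token rate dominates the total.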

Can I use different models for different agents?

Yes. Each agent can have its own model preference. You might use a fast, cheap model for a high-volume support agent and a powerful reasoning model for a research agent.

Can I use different models for different contexts within the same agent?

Yes. You can set a model per context (chat, scheduled tasks, email, Slack, Microsoft Teams, agent messages) and even override the model on individual scheduled tasks. This lets you optimize cost and capability for each type of work the agent does.

Learn more

- Anthropic (Claude): Learn about Claude models and capabilities
- OpenAI (GPT): Learn about GPT models and capabilities
- Google Gemini: Learn about Gemini models and capabilities